Is AI Taking Over? – Jamie P

Recently, whilst scrolling through my phone, I have noticed how often AI is being built into everyday activities: adverts for AI games, AI tools such as DALL-E and ChatGPT, and the most recent addition, the Snapchat AI assistant that you can access with a Snapchat+ subscription.

In recent years, the topic of artificial intelligence (AI) and its potential impact on society has been a source of much debate and speculation. While some experts tout the benefits of AI in improving efficiency, productivity, and innovation, others express concern over the potential risks and ethical implications of AI’s increasing dominance in various fields. So what’s happening so far?

The History of Artificial Intelligence

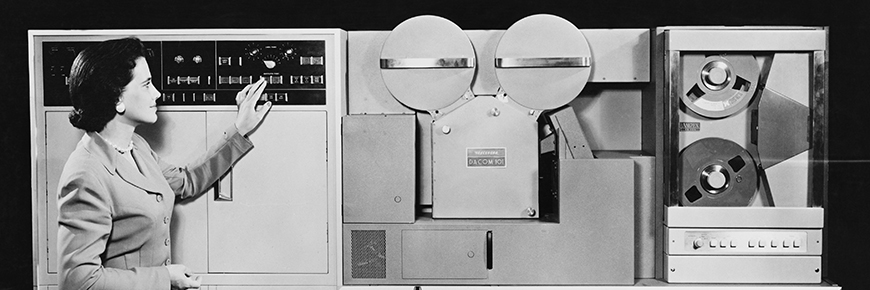

Artificial Intelligence, or AI, is a field of computer science that aims to create intelligent machines that can perform tasks that typically require human intelligence, such as learning, problem-solving, and decision-making. The idea of AI dates back to the mid-20th century when computer scientists began to explore the possibility of creating machines that could think and learn like humans.

Timeline of Development

- 1950: British mathematician Alan Turing publishes a paper titled “Computing Machinery and Intelligence”, which proposes the idea of a machine that could simulate human intelligence.

- 1956: John McCarthy, Marvin Minsky, Claude Shannon, and Nathaniel Rochester organise the Dartmouth Conference, which is considered the birth of AI as a field of study.

- 1957: Allen Newell, J. C. Shaw, and Herbert Simon create the General Problem Solver (GPS) program, which demonstrates the use of heuristic search to solve problems.

- 1958: John McCarthy develops the LISP programming language, which becomes the dominant language for AI research.

- 1970s: Expert systems, which are designed to mimic the decision-making abilities of human experts, become popular.

- 1980s: Connectionism, or the study of neural networks and their learning capabilities, gains popularity.

- 1990s: Machine learning comes into its own as a field, with algorithms such as decision trees and artificial neural networks becoming widely used.

- 1997: IBM’s Deep Blue computer defeats world chess champion Garry Kasparov in a six-game match.

- 2000s: The development of support vector machines, ensemble methods, and deep learning algorithms leads to significant advances in AI research.

- 2011: IBM’s Watson defeats human champions on the quiz show Jeopardy! using natural language processing and machine learning techniques.

- 2012: A deep neural network built by the Google Brain project achieves significant improvements in image recognition accuracy.

- 2016: AlphaGo, a computer program developed by Google’s DeepMind, defeats world champion Go player Lee Sedol in a five-game match.

- 2018: AI systems become increasingly integrated into everyday life, with applications such as virtual assistants, autonomous vehicles, and facial recognition technology becoming more common.

Pros & Cons

Pros

- Increased efficiency and productivity: AI can automate tedious and repetitive tasks, freeing up human workers to focus on higher-value work.

- Improved accuracy and precision: AI can perform tasks with a high degree of accuracy and consistency, reducing the risk of errors caused by human factors.

- Enhanced decision-making: AI can analyse vast amounts of data and provide insights and recommendations that humans may not have been able to discover.

- Increased innovation: AI can be used to develop new products and services, as well as improve existing ones.

- Improved safety: AI can be used to detect and respond to potential hazards and threats, improving safety in industries such as transportation and manufacturing.

Cons

- Job displacement: AI can automate jobs that were previously done by humans, potentially leading to job losses and disruptions in the labour market.

- Bias and discrimination: AI can be trained on biased data, which can lead to discriminatory outcomes in areas such as hiring and lending.

- Privacy concerns: AI can collect and analyse vast amounts of data, raising concerns about how that data is used and protected.

- Dependence on technology: As AI becomes more advanced and ubiquitous, there is a risk of becoming overly dependent on technology and losing critical human skills and judgment.

- Unintended consequences: AI systems can sometimes produce unintended or unexpected outcomes, which can have negative consequences for individuals and society.

The Big Question – Could AI Take Over The World?

This feels like too big a question for me to judge myself, so I went straight to the source and asked the AI itself: ChatGPT.

“No, it is highly unlikely that Artificial Intelligence (AI) could take over the world. While AI has made significant progress in recent years, current AI systems are still far from having human-like intelligence or consciousness. AI systems are designed to perform specific tasks within a set of parameters and cannot act outside of those parameters without explicit programming.

Moreover, AI systems lack the creativity, empathy, and ethical judgment that humans possess. While AI can learn from data and improve its performance, it lacks the ability to generate new goals or values on its own. Therefore, it is highly unlikely that AI would be motivated to take over the world or engage in any form of hostile action without human intervention.

That said, there are potential risks associated with the development and deployment of AI, including unintended consequences, bias, and job displacement, among others. It is important for researchers, developers, and policymakers to address these risks and ensure that AI is developed and used in a responsible and ethical manner.”

So, there’s your answer! Apparently AI is not going to take over the world, not anytime soon at least. The funny thing is, ChatGPT just wrote this entire Bubble article for me. How about that? Maybe now you will reach a different conclusion…
